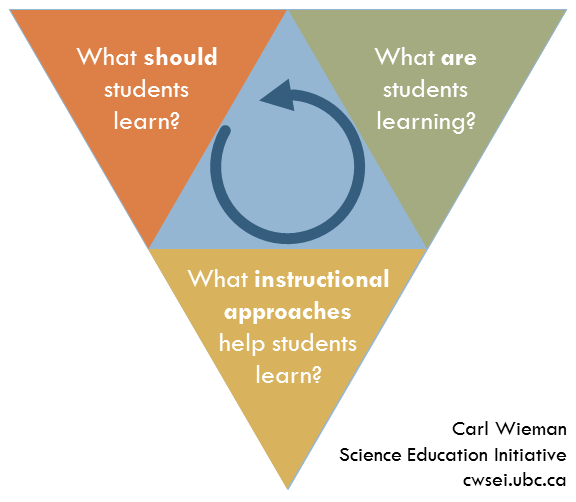

I’m a little scared to estimate the following number: how many times I’ve welcomed students to the first class of the term. It’s around 40, I think. Over the years, I’ve changed what I say and do in that first class. I used to spend a lot of time going over all the details of the syllabus. Yawn. After working with instructors via the Carl Wieman Science Education Initiative (CWSEI) at UBC and the Center for Teaching Development at UC San Diego, and reading these great resources, First Day of Class and Motivating Learning (both PDFs from the CWSEI), I do things differently now.

Yesterday, I tried something new in my teaching and learning class, The College Classroom. For the first time ever (for me), I did an “ice breaker” activity. You know, one of those activities where the students get to know each other. I want to describe why it took me so long to do it, what I think ice breakers can (should?) do, and what I actually did yesterday.

Ice-breaker activities make me uncomfortable. I don’t like striking up conversations with strangers, in class or anywhere. I’d rather stay quiet and anonymous. And so, I never asked my students to do it.

The educator in me knows, however, that there are incredible benefits to working and learning with others. So-called “social constructivism” says students need to construct their own knowledge based on their own backgrounds, skills, experiences, and motivations, and that construction is a whole lot easier when you do it with your peers. It’s the basis for peer instruction, the activity where the instructor poses a conceptually challenging, multiple-choice question, students think about it and vote with a clicker, discuss their understanding with their peers, in some cases vote again, and then participate in an instructor-moderated, class-wide discussion. Peer instruction is something I use in The College Classroom (and also something I teach in The College Classroom. Sometimes that gets confusing.)

I’m also keenly aware, through my association with the Center for the Integration of Research, Teaching and Learning (CIRTL) Network, of the importance of learning communities. I want my classroom to be a learning community where people of different backgrounds and interests can come together to learn. Sparking and then maintaining that learning community is one of my responsibilities as the instructor.

Okay, the pieces are starting to come together now: social constructivism, peer instruction, sparking a learning community, the first day of class, motivating learning,…

Aha! Let’s start the class with an icebreaker activity. Not some forced and awkward activity that makes people uncomfortable. Let’s do something that’s relevant to the class and initiates the kinds of interactions I want to choreograph in every class that follows.

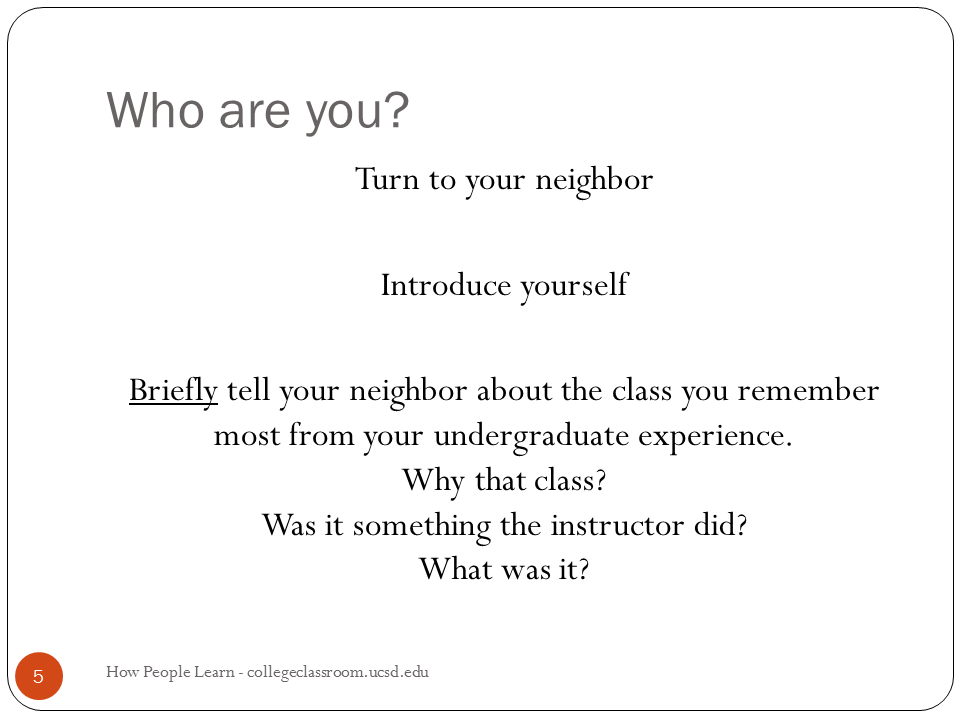

Here’s a slide from near the beginning of my first day’s slidedeck:

And here’s what happened: the room erupted in conversation. It was seriously loud. Okay, going great, going great, uh-oh conversations are dying off, time to do something Peter, what are you going to do Peter to make this useful, do it now before you lose them, do it now, Do It Now, DO IT NOW.

I asked for people to share a few of their stories. Good experiences and bad. I wrapped up the discussion by highlighting all the different factors that made those experiences memorable, factors like

- student motivation

- the instructor’s enthusiasm

- the instructor’s skill as a teacher

- the relationship between the student and the instructor

These are what I — we — will teach and learn in this course, and also how we’ll teach and learn in this course.

So, I’m converted. I’ll do icebreakers from now on. But not just any ol’ icebreaker. It’s got to be something that’s relevant to the class and has a purpose other than getting students to introduce themselves. It shouldn’t be an awkward, uncomfortable, artificial interaction but rather something the students will continue to experience in every class that follows.

What about you? Do you run icebreaker activities in your class? What do you do (and why)?