(This is a long, detailed post about creating and running a “jigsaw” activity. Mostly, I wrote it for myself before I forget all the details. Reinventing the wheel is bad enough – reinventing your own wheel is even worse!)

The other day, I ran a jigsaw activity in my teaching and learning course. Jigsaws are a great activity if you have a lot of content to cover in a number of contexts. My colleague, David J. Gross at UMass Amherst, explained it to me this way: Suppose your lesson is about 5 National Parks. A traditional lecture about those 5 Parks, with N PowerPoint slides giving the details about each Park, means 5N slidezzzzzzz.

Here’s how a jigsaw activity works. In Step 1, you group students together, with each group exploring one National Park. They become the local experts on that Park, working together to bring themselves up to a shared, higher level of knowledge.

In Step 2, you take it all apart and put it back together, like a jigsaw puzzle, so that each group has an expert about each of the 5 National Parks. In each group, they teach each other about each Park. In the end, every student has learned about each Park.

Did you notice how much lecturing about National Parks the instructor did? Zero. Zippo. Zilch. Instead of a single long exposition by the instructor, there are 4 student-centered conversations happening in parallel. It might even take less class time, or, if the time is already allocated, it gives more time for each National Park.

Cool, huh? Instructor gets to do nothing!

Well, nothing except a whole lot of planning and choreographing so students can stay engaged in concepts instead of wondering what to do or wandering around looking for a group.

My jigsaw: Formative assessment that supports learning

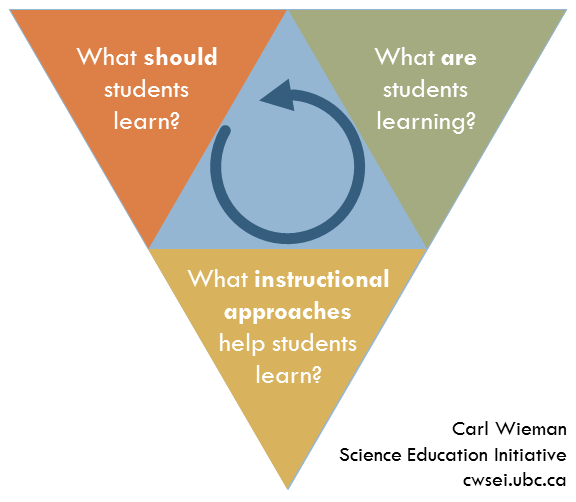

In my teaching and learning class, we were discussing practice and formative feedback that supports learning. Following Chapter 5 of How Learning Works, instructors should ensure

- practice is goal-directed

- practice is productive

- feedback is timely

- feedback is at the appropriate level

To help explore these characteristics, I decided to use two tools:

analogy: How People Learn advises us that “students come to the classroom with preconceptions about how the world works” (p. 14) and therefore, “[t]eachers must draw out and work with the preexisting understandings that their students bring with them.” (p. 19) I wanted my students to think about those 4 characteristics first through their experiences of a sport or hobby and then in the context of teaching and learning.

contrasting cases: Again from How People Learn, “[t]eachers must teach some subject matter in depth, providing many examples in which the same concept is at work and providing a firm foundation of factual knowledge.” (p. 20) Contrasting cases are a way to present the same concept twice. And sometimes, a good way to figure out what something IS, is to figure out what it’s NOT.

For each characteristic, like timely feedback, I wanted students to come up with scenarios of

- untimely feedback in a sport/hobby experience (“bad, sport/hobby”)

- timely feedback in a sport/hobby experience (“good, sport/hobby”)

- untimely feedback in teaching and learning (“bad, teaching and learning”)

- timely feedback in teaching and learning (“good, teaching and learning”)

That’s 4 characteristics x 4 scenarios each = 16 different scenarios in total. There’s NO WAY I’m going to make 16N slides and flick through them.

Let’s jigsaw, I said to myself. But how? How do I choreograph Step 1 (prepare expertise) and Step 2 (share expertise)? I started from the end and worked backwards.

Here’s what I wanted the Step 2 conversations to look like:

Each group would have one student sharing expertise about one of the characteristics

- practice is goal-directed (green)

- practice is productive (blue)

- feedback is timely (purple)

- feedback is at the appropriate level (orange)

and each student would be prepared to share 4 scenarios

I. “bad” in sport/hobby

II. “good” in sport/hobby

III. “bad” in teaching and learning

IV. “good” in teaching and learning

I have about 20 students in each session of the class, so that means I’ll have 5 groups at the end. If there are additional students #21, #22, and #23, they can double-up in some groups. As soon as I have 24 students, #21 thru #24 can form their own discussion group.
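If you want to check that group arithmetic for a different class size, here is a minimal Python sketch. The function name `step2_groups` is made up for illustration, and it assumes one student per color in each Step 2 group, with any leftover students doubling up.

```python
def step2_groups(num_students, num_colors=4):
    """Return (full_groups, extras) for the Step 2 discussion groups.

    A full group has one student per characteristic (color).
    Extras are the leftover students who double up in existing groups.
    """
    return divmod(num_students, num_colors)


for n in (20, 23, 24):
    groups, extras = step2_groups(n)
    print(f"{n} students: {groups} full groups, {extras} doubling up")
```

With 20 students this gives 5 full groups and nobody doubling up; with 23 it gives 5 groups and 3 students doubling up; at 24 a sixth group forms.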

Look back at the picture of the final discussion groups showing Step 2 of the jigsaw activity. To create that (5 times), in Step 1 I’ll need 5 people teaching each other about green, 5 blue, 5 purple, and 5 orange.

Choreographing with Colored Paper

There’s a lot of “structure” that needs to be built into this activity

- each student is assigned to a characteristic / color

- each student needs to know what their Step 1 discussion is about

- students need to sit in one-color groups for Step 1

- students need to move to every-color groups for Step 2

- probably more…

I can’t waste a lot of time making this happen during class. What tools do I have at my disposal for structuring this activity? COLORED PAPER (As simple as it sounds, colored paper is one of my favorite pieces of education technology.)

I created 4 worksheets, one for each characteristic, and copied them onto colored paper. I interlaced the worksheets and put the stack at the classroom door. I arranged the tables and chairs into 4 stations with 5-6 chairs each, and placed a colored sheet of paper on each station. [Oh yeah, I forgot about that! That’s why I’m writing this.] When the students entered, they took the top worksheet and sat at that color’s station.
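To see why the interlaced stack balances the stations, here is a rough Python model of students taking the top sheet as they arrive. The student names, the order of colors in the stack, and the function name `hand_out_worksheets` are all assumptions for illustration.

```python
from collections import defaultdict
from itertools import cycle

# Assumed stack order; any fixed repeating order works the same way.
COLORS = ["green", "blue", "purple", "orange"]


def hand_out_worksheets(students):
    """Give each arriving student the next sheet from an interlaced stack.

    Returns a dict mapping each color (Step 1 station) to its students.
    """
    stations = defaultdict(list)
    # zip stops when the students run out, so cycle() never loops forever.
    for student, color in zip(students, cycle(COLORS)):
        stations[color].append(student)
    return dict(stations)


students = [f"student{i}" for i in range(1, 21)]  # hypothetical roster of 20
stations = hand_out_worksheets(students)
for color, members in stations.items():
    print(color, len(members))
```

Because the colors repeat in a fixed cycle, the station sizes never differ by more than one, no matter how many students walk in.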

The ultimate goal is for us to have a class-wide discussion of good teaching practices to support learning. The jigsaw activity should prepare every student to contribute to that conversation but I didn’t want students to spend too much time in Step 2 sharing their experiences and ideas about sports/hobbies and about “bad” teaching practices. I also wanted students to discover how intertwined those 4 characteristics are: to provide productive practice, you need it to be goal-oriented, and so on.

I needed a way to slice and re-mix the scenarios so the students discussed them by scenario (“bad” in sport/hobby, …, “good” in teaching and learning) rather than by characteristic (practice is goal-directed, …, feedback is at the appropriate level). So that’s exactly what I did: I sliced. Well, they sliced.

If you look at the picture of the worksheets above, you’ll notice some dashed lines. At the end of Step 1, I instructed the students to tear their colored worksheets into quarters along the dashed lines. (Notice, also, each quarter has a I, II, III, IV label.) Then I invited them to re-organize themselves into groups so that each group had a representative of each color. That was easy for them to do because they could easily see what colors were already at each table. Since there were equal numbers of each color (because the worksheets were interlaced in the stack at the classroom door) there was a place for everyone and everyone had a place.
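In class the students sorted themselves just by looking at the colors on each table, but the regrouping can be modeled in a few lines of Python. This is only a sketch: the function name `regroup` and the placeholder student names are hypothetical, and it assumes every color station has at least one student.

```python
COLORS = ["green", "blue", "purple", "orange"]


def regroup(stations):
    """Form every-color groups by taking the k-th student from each station.

    If the stations are uneven, leftover students double up in
    existing groups. Assumes each station has at least one student.
    """
    size = min(len(members) for members in stations.values())
    groups = [
        [(color, stations[color][k]) for color in stations]
        for k in range(size)
    ]
    # Students beyond the smallest station's size join existing groups.
    extras = [(c, s) for c in stations for s in stations[c][size:]]
    for i, extra in enumerate(extras):
        groups[i % len(groups)].append(extra)
    return groups


# Hypothetical Step 1 stations with 5 students each.
stations = {c: [f"{c}{k}" for k in range(1, 6)] for c in COLORS}
groups = regroup(stations)
print(len(groups), "groups; first group:", groups[0])
```

Equal numbers of each color mean every Step 2 group ends up with exactly one expert per characteristic.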

Settled in every-colored groups, they worked their way through the 4 scenarios I, II, III, IV of practice and assessment that supports learning. I could easily see what scenario they were discussing and could nudge them towards the important, scenario IV discussion if they were lagging behind.

Darn, I forgot to keep track of the time while I ran this jigsaw but I seem to remember it taking about 20 minutes for Step 1 and Step 2, and then another 10 minutes or so for the class-wide discussion about the characteristics of formative assessment that support learning (scenario IV).

The classroom was loud with expert-like discussions about teaching and learning. Twenty brains were engaged. Twenty students left knowing a lot about practice and assessment that supports learning. And knowing that their own experiences and knowledge played a critical role in the learning of their classmates. They can ask themselves, “Did I contribute to class today? Was the class better because I was there?” Yes and yes.

Big question: why bother?

If it took me this long to write down these steps, you know it took even longer to design (and re-design) the materials, plan and rehearse the choreography, prepare the materials, re-arrange the classroom furniture, and more. It would have been a helluvalot easier for me to present 4 slides, one on each of the characteristics of formative assessment (or easier still, one slide with 4 bullet points).

But that’s not what we do.

Of course there are practical considerations but how easy it is for ME is not what drives how I design my lessons. Rather, I challenge myself to create opportunities for EVERY student to practice thinking about and discussing the issues and concepts. One thing I love about these jigsaw activities is that every student has a well-defined job (share their expertise in Step 2) that gives them the opportunity to make critical contributions to the discussion. The steps of the jigsaw and all the colored-paper-driven activities prepare them for that discussion.

I’m happy to share the resources shown here, talk through any points that are unclear, chat about how to adapt it to your learning outcomes – leave a comment, email me at peternewbury42 at gmail dot com, or hit me on Twitter @polarisdotca.