I went to a day-long retreat where the participants, about 20 of us, were deliberately selected to represent a wide range of backgrounds, experiences, and expertise – all the stakeholders in a big project. The retreat organizer suggested each person prepare a 5-10 minute presentation about what they’ll bring to the project and what they’re hoping to get out of it. I was there to represent the teaching and learning support my center provides to instructors.

I had nightmar—, uh, visions of participant after participant clicking through PPT after PPT. The educator in me didn’t want that to happen so I decided to do something active to give my colleagues a better understanding of what I do. They would experience it rather than listen to me describe it. You know, active learning.

(For the record, PPT after PPT was NOT what happened. People talked and distributed some hand-outs. Better that I was prepared, though.)

That’s when I remembered a really interesting and engaging activity I did during a workshop from Kimberly Tanner: card sorting. The idea is, you give each group of 2-4 participants a short stack of cards. Not playing cards but, for example, 9 index cards, one item on each card. In Kimberly’s workshop, the cards were 9 different superheroes. You ask the groups to sort the cards into categories (any categories they want) with just a couple of rules: there have to be at least 2 categories, and there can’t be 9 categories (i.e., you can’t put each card in its own category). Well, there are more rules but that’s all I needed for my version.
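If you like to think in code, here is a minimal sketch of those two rules. Everything in it is my own illustration, not part of Kimberly’s workshop: the function name `is_valid_sort`, the placeholder card names, and the extra assumption that every card gets used exactly once.

```python
def is_valid_sort(categories, n_cards=9):
    """Check a group's sort against the two rules.

    `categories` maps a category name to the list of cards placed in it.
    Assumption (mine, for illustration): every card is used exactly once.
    """
    # Ignore any category a group named but left empty.
    non_empty = [cards for cards in categories.values() if cards]
    all_cards = [card for cards in non_empty for card in cards]

    # Every card appears exactly once across all categories.
    uses_each_card_once = (
        len(all_cards) == n_cards and len(set(all_cards)) == n_cards
    )

    # At least 2 categories, but not one category per card.
    return uses_each_card_once and 2 <= len(non_empty) < n_cards


# Hypothetical placeholder cards; the real cards would be superheroes or courses.
cards = [f"card {i}" for i in range(1, 10)]

two_piles = {"pile A": cards[:4], "pile B": cards[4:]}
one_pile = {"everything": cards}
nine_piles = {f"pile {i}": [card] for i, card in enumerate(cards)}

print(is_valid_sort(two_piles))   # allowed
print(is_valid_sort(one_pile))    # not allowed: only 1 category
print(is_valid_sort(nine_piles))  # not allowed: each card in its own category
```

The point of the upper limit is that one-card-per-category lets a group avoid making any decisions about what the cards have in common.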

Then something interesting happens. You’ve carefully chosen the cards so that the items have both surface features (these are superheroes with primarily green costumes, these are mostly blue, these mostly red) and deep features (these are Marvel superheroes, these are DC.) How people sort the cards reveals their level of familiarity and expertise with the content, and gives each participant ample opportunity to share that knowledge with their group-mates.

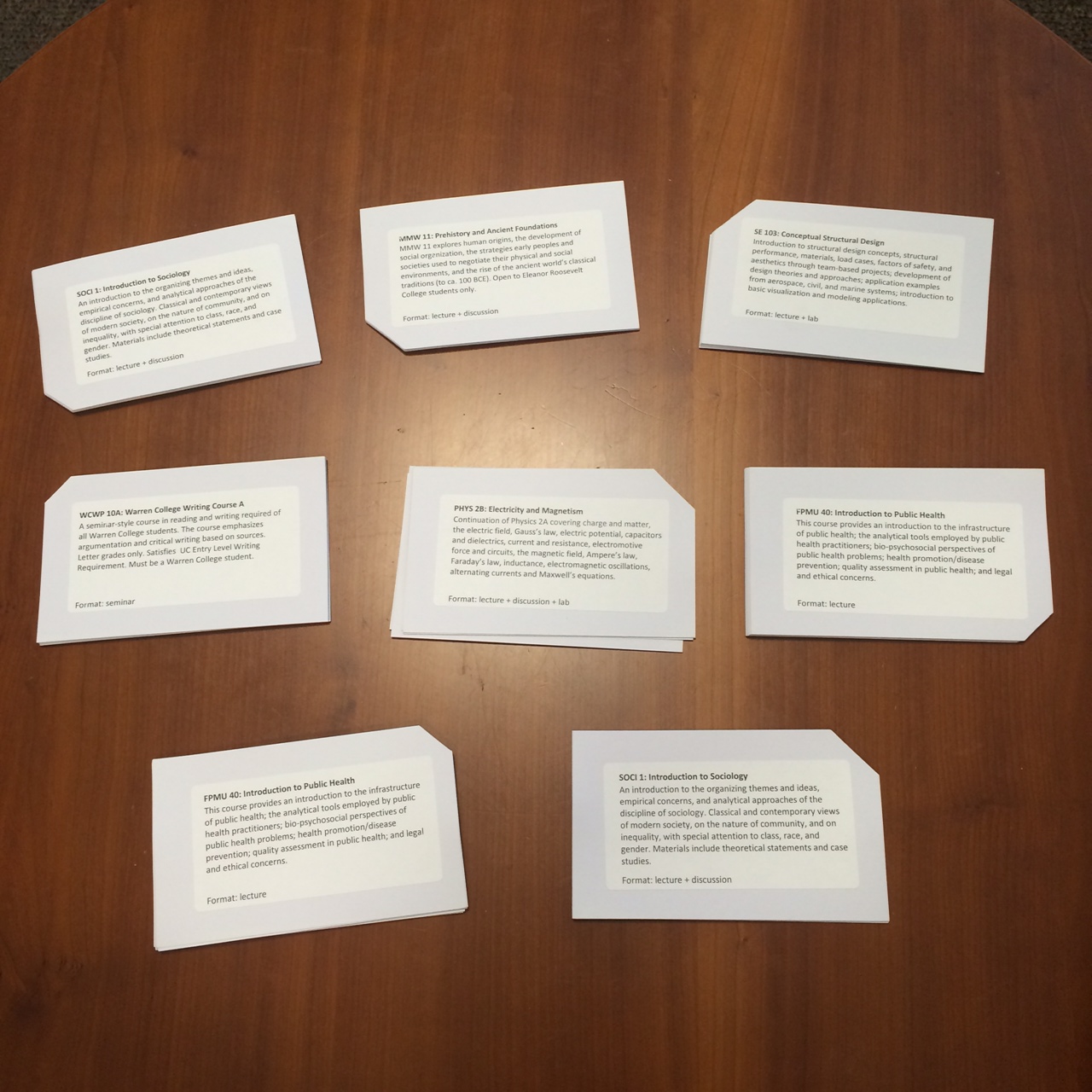

Back to my card sorting task: I made 9 cards, each one giving the name of a course, the course description, and the format of the course meetings (lectures, labs, discussions, seminars, online, etc.) Thanks, btw, to my colleague Dominique Turnbow for the great advice about what to put on the cards.

So, 9 courses. Please sort them into at least 2 but fewer than 9 categories:

There were lots of surface features that could be used:

- STEM vs Social/Behavioral/Economic Sciences vs Arts & Humanities

- those with discussion sections vs those with labs

- which UC San Diego Division they fit in: Biological Sciences, Physical Sciences, Engineering, Social Sciences, Arts & Humanities, Medicine, etc.

- (I forgot to put class size on the cards – d’oh! – but that would be another way to sort them: small, medium, large, ridiculous enrolment)

I was expecting some of those “surface” sorts but my colleagues blew through those surface features and quickly re-sorted based on deeper features. Honestly, the categories they invented and the categories I made up ahead of time (in case they needed an example) are mixed up in my memory but here are some deeper features (analogous to “superhero publisher” rather than “color of superhero costume”):

- technology enhanced

- amount of active learning in typical classes

- computationally-focused

- amount of close reading required

- use of statistics

- amount of writing required

Well?

We took 5-10 minutes to sort and then another 10 minutes to report out. Sure, I went over my 10-minute slot but the schedule was very flexible (by design).

I think the activity went great. It gave participants, many of whom were strangers to each other, an opportunity to share their backgrounds and expertise with each other. It revealed the breadth of knowledge in the room. And it gave everyone involved a reminder to look past the surface features of our meeting and project – who will be responsible for this or that, how many offices will be required, what budget will this come from – and look at the big picture: supporting learning.

Details about implementation

(These details are mostly for me so I’ll remember what to do next time. If you’re thinking about running a card-sorting activity, you might find them helpful, too.)

- I started with a spreadsheet to help me select enough courses to cover the surface and deeper features I wanted. I printed it out and had it with me during the activity so I could remember why I’d included each course and what I anticipated as surface / deeper features.

- I wrote the course descriptions in Word as 2″ x 4″ labels, printed the labels, and stuck them to index cards. This made it easy to create as many stacks as I needed:

- What do you notice about the stacks? Right, the missing corners. Each stack has a different missing corner so I can easily reset the cards into stacks. Can you imagine the tedious task of sorting 8 x 9 = 72 virtually identical cards into stacks? No, thank you!

- There were 2 main camps of people at the retreat, plus a number of important “third parties.” As I began the activity, I formed groups of 2-3 with at least one person from each camp.

- I used some old fridge magnets to make 9 magnets, one for each course. When the groups reported out, I quickly arranged the magnets on a handy whiteboard so I could hold it up for the others in the room to see: